seospider files in database storage mode as well, which will take time to convert to a database (in the same way as version 11) before they are compiled and available to re-open each time almost instantly.Įxport and import options are available under the ‘File’ menu in database storage mode.

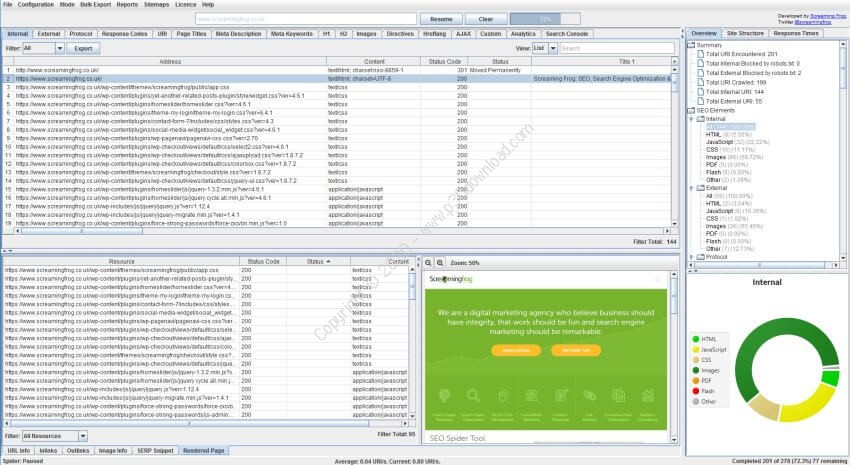

seospider file for anyone using memory storage mode still. You can export the database crawls to share with colleagues, or if you’d prefer export as an. But it does mean you will need to delete crawls you don’t want to keep from time to time (this can be done in bulk). You also don’t need to save anymore, crawls will automatically be committed to the database. Database opening is significantly quicker, often instant. seospider files anymore, which previously could take some time for very large crawls. seospider crawl file in database storage mode. The main benefit of this switch is that re-opening the database files is significantly quicker than opening an. The ‘Crawls’ menu displays an overview of stored crawls, allows you to open them, rename, organise into project folders, duplicate, export, or delete in bulk. seospider file), they will automatically be saved in the database and can be accessed and opened via the ‘File > Crawls…’ top-level menu. In database storage mode, you no longer need to save crawls (as an. Last year we introduced database storage mode, which allows users to choose to save all data to disk in a database rather than just keep it in RAM, which enables the SEO Spider to crawl very large websites.īased upon user feedback, we’ve improved the experience further. 2) Database Storage Crawl Auto Saving & Rapid Opening Google are aware of the issue and we have included an FAQ on how to set-up an exclude filter to prevent it from inflating analytics data. Please note, using the PageSpeed Insights API (like the interface) can affect analytics currently. 
There’s also a very cool ‘PageSpeed Opportunities Summary’ report, which summaries all the opportunities discovered across the site, the number of URLs it affects, and the average and total potential saving in size and milliseconds to help prioritise them at scale, too.Īs well as bulk exports for each opportunity, there’s a CSS coverage report which highlights how much of each CSS file is unused across a crawl and the potential savings. There are 19 filters for opportunities and diagnostics to help identify potential speed improvements from Lighthouse.Ĭlick on a URL in the top window and then the ‘PageSpeed Details’ tab at the bottom, the lower window populates with metrics for that URL, and orders opportunities by those that will make the most impact at page level based upon Lighthouse savings.īy clicking on an opportunity in the lower left-hand window panel, the right-hand window panel then displays more information on the issue, such as the specific resources with potential savings.Īs you would expect, all of the data can be exported in bulk via ‘ Reports‘ in the top-level menu. In the PageSpeed tab, you’re able to view metrics such as performance score, TTFB, first contentful paint, speed index, time to interactive, as well as total requests, page size, counts for resources and potential savings in size and time – and much, much more. (The irony of releasing pagespeed auditing, and then including a gif in the blog post.) You’re able to choose and configure over 75 metrics, opportunities and diagnostics (under ‘Config > API Access > PageSpeed Insights > Metrics’) to help analyse and make smarter decisions related to page speed. The great thing about the API is that you don’t need to use JavaScript rendering, all the heavy lifting is done off box. The field data from CrUX is super useful for capturing real-world user performance, while Lighthouse lab data is excellent for debugging speed related issues and exploring the opportunities available. 
We’ve introduced a new ‘PageSpeed’ tab and integrated the PSI API which uses Lighthouse, and allows you to pull in Chrome User Experience Report (CrUX) data and Lighthouse metrics, as well as analyse speed opportunities and diagnostics at scale.

You’re now able to gain valuable insights about page speed during a crawl. 1) PageSpeed Insights Integration – Lighthouse Metrics, Opportunities & CrUX Data

For version 12, we’ve listened to user feedback and improved upon existing features, as well as introduced some exciting new ones. In version 11 we introduced structured data validation, the first for any crawler. We are delighted to announce the release of Screaming Frog SEO Spider version 12.0, codenamed internally as ‘Element 115’.
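To make the ‘PageSpeed Opportunities Summary’ idea described above a little more concrete, here is a minimal Python sketch of that kind of roll-up. It is not how the SEO Spider does it internally. It assumes you already have the Lighthouse `audits` object for each URL, and that opportunity audits carry `details.type == "opportunity"` with `overallSavingsMs` and `overallSavingsBytes`, as in the Lighthouse JSON returned by the PSI API at the time of writing. The function name `summarise_opportunities`, the sample data, and the choice to count a URL as affected only when its saving is above zero are all our own assumptions for illustration.

```python
from collections import defaultdict


def summarise_opportunities(results):
    """Aggregate Lighthouse opportunities across crawled URLs.

    `results` maps each URL to its Lighthouse `audits` dict (assumed shape).
    """
    totals = defaultdict(lambda: {"urls": 0, "total_ms": 0.0, "total_bytes": 0})
    for url, audits in results.items():
        for audit_id, audit in audits.items():
            details = audit.get("details") or {}
            if details.get("type") != "opportunity":
                continue  # metrics and diagnostics are ignored here
            saving_ms = details.get("overallSavingsMs", 0) or 0
            if saving_ms <= 0:
                continue  # assumption: no saving means the URL is not affected
            row = totals[audit_id]
            row["urls"] += 1
            row["total_ms"] += saving_ms
            row["total_bytes"] += details.get("overallSavingsBytes", 0) or 0

    summary = [
        {
            "opportunity": audit_id,
            "urls_affected": row["urls"],
            "total_saving_ms": row["total_ms"],
            "average_saving_ms": row["total_ms"] / row["urls"],
            "total_saving_bytes": row["total_bytes"],
        }
        for audit_id, row in totals.items()
    ]
    # Largest total time saving first, to help prioritise at scale.
    summary.sort(key=lambda r: r["total_saving_ms"], reverse=True)
    return summary


# Hypothetical sample data for two URLs (not real crawl output).
sample = {
    "https://example.com/": {
        "unused-css-rules": {
            "details": {"type": "opportunity", "overallSavingsMs": 300, "overallSavingsBytes": 20000}
        },
        "render-blocking-resources": {
            "details": {"type": "opportunity", "overallSavingsMs": 150}
        },
        "first-contentful-paint": {"numericValue": 1200},
    },
    "https://example.com/about": {
        "unused-css-rules": {
            "details": {"type": "opportunity", "overallSavingsMs": 100, "overallSavingsBytes": 10000}
        },
        "render-blocking-resources": {
            "details": {"type": "opportunity", "overallSavingsMs": 0}
        },
    },
}

for row in summarise_opportunities(sample):
    print(row)
```

Sorting by total saving rather than average is just one way to prioritise. An opportunity with a small saving on every page can outrank one with a large saving on a single URL, which is usually what you want when working at site level.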
